The microchip, often referred to as an integrated circuit (IC), represents one of the most significant technological advancements of the 20th century. Its inception can be traced back to the late 1950s, a period marked by rapid developments in electronics and computing. The need for smaller, more efficient components arose as engineers sought to enhance the performance of electronic devices while reducing their size and cost.

Prior to the microchip, electronic circuits were constructed using discrete components such as resistors, capacitors, and transistors, which were bulky and required extensive wiring. This not only made devices larger but also increased the likelihood of failure due to the complexity of connections. The first working integrated circuit was demonstrated in 1958, a pivotal moment that would lay the groundwork for modern computing.

This innovation was born out of necessity during the Cold War era, when advancements in technology were crucial for national defense and space exploration. The microchip allowed for the miniaturization of circuits, enabling engineers to pack more functionality into smaller spaces.

Key Takeaways

- The microchip was born out of the need for a smaller, more reliable alternative to circuits wired together from discrete components, leading to the development of integrated circuits in the late 1950s.

- Jack Kilby and Robert Noyce were the key innovators behind the microchip, with Kilby creating the first working integrated circuit and Noyce developing the first practical microchip.

- The microchip has had a profound impact on technology, revolutionizing industries such as computing, telecommunications, and consumer electronics.

- From integrated circuits to modern processors, the evolution of the microchip has led to exponential increases in processing power and efficiency.

- The microchip revolution has changed the world by enabling advancements in fields such as artificial intelligence, medical technology, and space exploration.

The Innovators: Jack Kilby and Robert Noyce

Two key figures in the development of the microchip are Jack Kilby and Robert Noyce, whose contributions were instrumental in shaping the future of electronics. Jack Kilby, an engineer at Texas Instruments, is credited with creating the first working integrated circuit in 1958. His design utilized a single piece of semiconductor material to perform multiple functions, effectively combining several components into one compact unit.

Kilby’s innovation was not merely a technical achievement; it represented a paradigm shift in how electronic devices could be designed and manufactured. His work earned him the Nobel Prize in Physics in 2000, recognizing the profound impact of his invention on technology. On the other hand, Robert Noyce, co-founder of Intel Corporation, independently developed his version of the integrated circuit around the same time as Kilby.

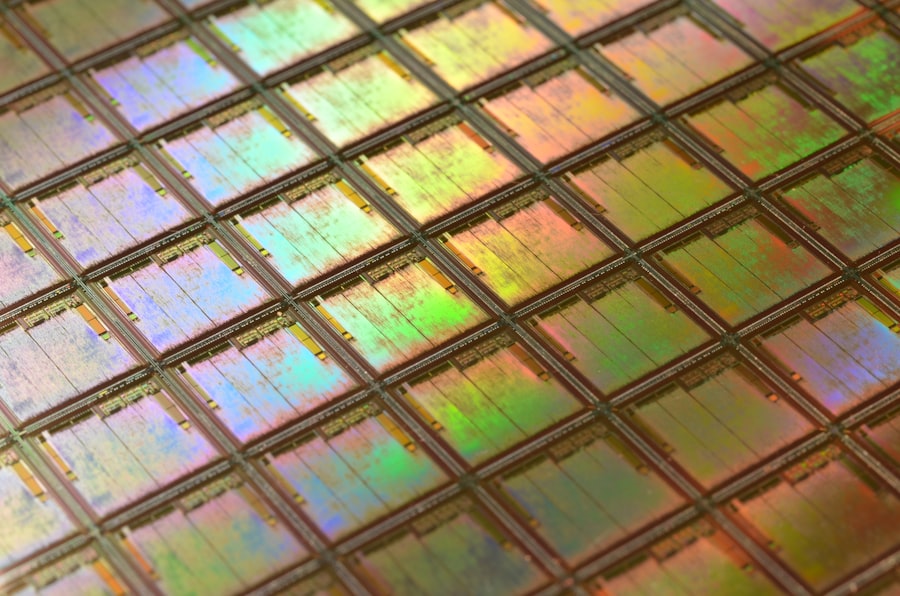

Noyce’s approach involved using silicon as a substrate, which allowed for greater efficiency and scalability in production. His work led to the creation of the planar process, a method that enabled multiple layers of circuitry to be built on a single chip. This innovation was crucial for mass production and set the stage for the rapid advancement of microchip technology.

Noyce’s contributions not only solidified his place in history but also helped establish Intel, which he co-founded in 1968, as a leader in semiconductor manufacturing.

The Impact of the Microchip on Technology

The introduction of the microchip has had a transformative effect on technology across various sectors. In computing, microchips have enabled the development of personal computers, laptops, and servers that are vastly more powerful than their predecessors. The ability to integrate millions, and eventually billions, of transistors onto a single chip has led to significant increases in processing speed and efficiency.

For instance, early computers relied on vacuum tubes and discrete transistors, which limited their capabilities and made them large. With microchips, computers became smaller, faster, and more accessible to the general public.

Beyond computing, microchips have revolutionized telecommunications, automotive technology, healthcare, and consumer electronics. In telecommunications, microchips have facilitated the development of smartphones and wireless communication technologies that connect billions of people worldwide. The automotive industry has also benefited from microchip technology through advancements in safety features, navigation systems, and electric vehicles. In healthcare, microchips are used in medical devices such as pacemakers and diagnostic equipment, improving patient outcomes and enabling remote monitoring.

The pervasive influence of microchips is evident in everyday life, underscoring their role as a cornerstone of modern technology.

The Evolution of the Microchip: From Integrated Circuits to Modern Processors

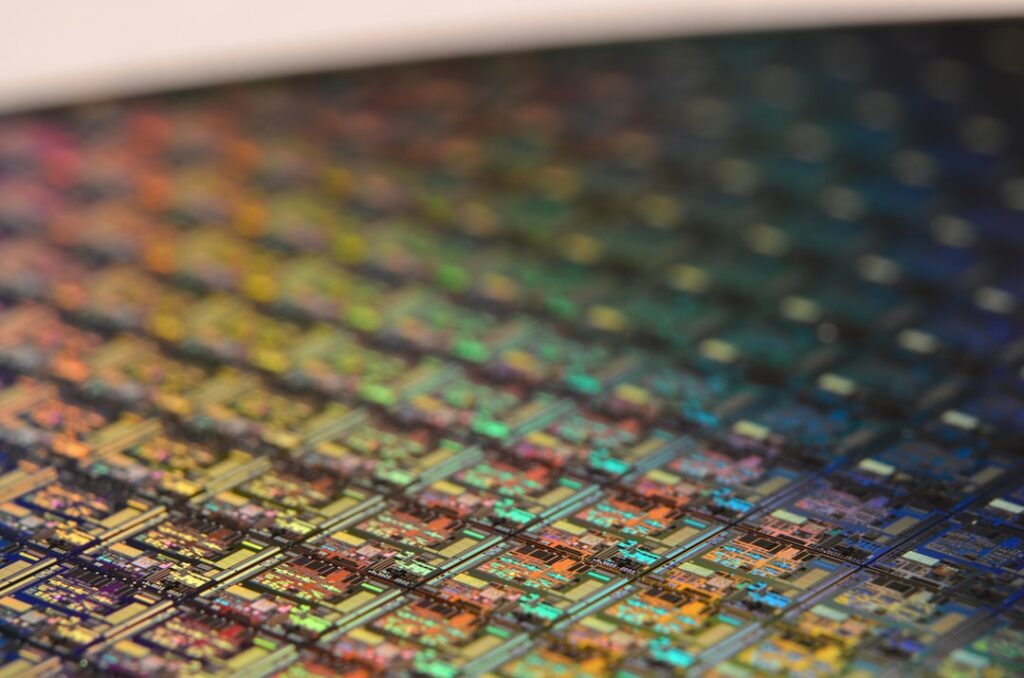

The evolution of microchips has been characterized by continuous innovation and miniaturization. Initially, integrated circuits were relatively simple devices with limited functionality. However, as technology progressed, so did the complexity and capabilities of microchips.

Complementary metal-oxide-semiconductor (CMOS) technology, invented in the 1960s and widely adopted in the decades that followed, marked a significant milestone in this evolution. CMOS allowed for lower power consumption and greater integration density, enabling manufacturers to produce chips with millions of transistors. In the 21st century, advances in fabrication techniques have produced processors capable of executing billions of instructions per second.

The transition from 32-bit to 64-bit architectures exemplifies this evolution, allowing for greater memory addressing and improved performance in computing tasks. Furthermore, innovations such as multi-core processors have enabled parallel processing capabilities, significantly enhancing computational power for applications ranging from gaming to scientific simulations.
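The memory-addressing gain from wider architectures can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes byte-addressable memory and a flat address space that uses every address bit, which real 64-bit processors typically do not (many implement fewer address bits in practice). The function names `addressable_bytes` and `human_readable` are made up for this example.

```python
def addressable_bytes(address_bits: int) -> int:
    """Return how many distinct byte addresses fit in the given address width.

    Assumes one byte per address and that every bit is usable (a simplification).
    """
    return 2 ** address_bits


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary units (1 KiB = 1024 B), rounding down."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = num_bytes
    unit_index = 0
    # Step up one unit at a time until the value drops below 1024
    # or the largest unit in the list is reached.
    while value >= 1024 and unit_index < len(units) - 1:
        value //= 1024
        unit_index += 1
    return f"{value} {units[unit_index]}"


# Compare the theoretical address space of 32-bit and 64-bit addresses.
for bits in (32, 64):
    print(f"{bits}-bit: {human_readable(addressable_bytes(bits))}")
```

Under these assumptions a 32-bit address reaches 4 GiB of memory while a 64-bit address reaches 16 EiB, roughly four billion times more.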

The Microchip Revolution: Changing the World

The microchip revolution has fundamentally altered how we interact with technology and each other. It has democratized access to information and communication, fostering a global interconnectedness that was previously unimaginable. The proliferation of personal computers and smartphones has empowered individuals with tools for education, creativity, and entrepreneurship. Social media platforms have emerged as powerful channels for communication and expression, reshaping societal norms and cultural dynamics.

Moreover, industries have been transformed by microchip technology through automation and data analytics. Manufacturing processes have become more efficient with the integration of smart sensors and robotics powered by microchips. In finance, algorithms running on advanced processors analyze vast amounts of data to inform investment decisions in real time. The rise of artificial intelligence (AI) is another testament to the impact of microchips; machine learning algorithms rely heavily on powerful processors to analyze data patterns and make predictions. This revolution has not only changed industries but has also raised ethical questions about privacy, security, and the future of work.

Challenges and Controversies in the Development of the Microchip

Despite its many benefits, the development of microchip technology has not been without challenges and controversies. One significant issue is the environmental impact associated with semiconductor manufacturing. The production process involves hazardous chemicals and consumes large amounts of water and energy, and discarded devices add to a growing stream of electronic waste.

As demand for microchips continues to grow, driven in part by trends such as Internet of Things (IoT) devices, the environmental footprint becomes increasingly concerning. Efforts are being made within the industry to adopt more sustainable practices; however, balancing innovation with environmental responsibility remains a complex challenge.

Another area of contention is the security vulnerabilities that can arise in microchip design. As devices become more interconnected through networks, they become potential targets for cyberattacks. High-profile incidents involving compromised chips have raised alarms about supply chain security and data integrity. Additionally, geopolitical tensions have led to concerns over reliance on foreign semiconductor manufacturers for critical technologies.

Countries are now investing heavily in domestic semiconductor production capabilities to mitigate risks associated with supply chain disruptions.

The Legacy of Kilby and Noyce: Their Influence on the Tech Industry

The legacies of Jack Kilby and Robert Noyce extend far beyond their individual contributions to microchip technology; they have shaped an entire industry characterized by innovation and entrepreneurship. Their pioneering work helped companies like Texas Instruments and Intel become leaders in semiconductor manufacturing. Their influence can be seen in the culture of innovation that permeates Silicon Valley and other tech hubs around the world.

Kilby’s emphasis on practical engineering solutions inspired generations of engineers to pursue careers in electronics and computer science. His work ethic and commitment to problem-solving continue to resonate within educational institutions that train future innovators. Similarly, Noyce’s vision for scalable semiconductor production has driven advancements in manufacturing processes that allow for rapid prototyping and iteration—key elements in today’s fast-paced tech landscape.

Both innovators exemplified how creativity combined with technical expertise can lead to groundbreaking advancements that change lives.

The Future of the Microchip: Advancements and Innovations

Looking ahead, the future of microchip technology promises exciting advancements driven by ongoing research and development efforts. One area garnering significant attention is quantum computing, which uses quantum bits (qubits) to tackle certain classes of problems that are impractical for classical computers. Companies like IBM and Google are at the forefront of this field, exploring how quantum processors can address complex problems ranging from cryptography to drug discovery.

Additionally, neuromorphic computing is emerging as a promising frontier that seeks to mimic human brain functions through specialized chip architectures designed for artificial intelligence applications. These chips aim to process information more efficiently by emulating neural networks found in biological systems. As AI continues to evolve, neuromorphic chips could play a crucial role in enabling machines to learn and adapt more like humans.

Furthermore, advancements in materials science may lead to new types of semiconductors that outperform traditional silicon-based chips. Research into materials such as graphene or gallium nitride holds potential for creating faster and more energy-efficient processors that could revolutionize everything from consumer electronics to renewable energy systems.

In summary, while challenges remain in terms of sustainability and security within the microchip industry, ongoing innovations promise a future where microchips continue to drive technological progress across various domains.


FAQs

What is the book “The Chip: How Two Americans Invented the Microchip and Launched a Revolution” about?

The book “The Chip” by T.R. Reid tells the story of the invention of the microchip by two Americans, Jack Kilby and Robert Noyce, and how their invention revolutionized the world of technology.

Who are the main characters in the book?

The main characters in the book are Jack Kilby and Robert Noyce, the two American engineers who independently invented the microchip in the late 1950s.

What impact did the invention of the microchip have on the world?

The invention of the microchip revolutionized the world of technology by making it possible to miniaturize electronic components and create the foundation for modern computers, smartphones, and other electronic devices.

What is the significance of the microchip in the history of technology?

The microchip is considered one of the most important inventions in the history of technology, as it enabled the development of smaller, faster, and more powerful electronic devices that have transformed the way we live and work.

What is the author’s background and expertise in the subject?

T.R. Reid is a journalist and author known for his expertise in technology and innovation. He has written extensively on the subject of technology and its impact on society, and has a deep understanding of the history and significance of the microchip.