The landscape of data warehousing has been significantly shaped by the contributions of Ralph Kimball, a pioneer in the field whose methodologies have become foundational for organizations seeking to harness the power of data. Kimball’s Data Warehouse Toolkit series, particularly the original volume published in 1996, has served as a cornerstone for practitioners and scholars alike. This seminal work introduced a structured approach to data warehousing that emphasized the importance of dimensional modeling and user-centric design.

By focusing on how end-users interact with data, Kimball’s methodologies have enabled organizations to create systems that are not only efficient but also intuitive and accessible. The toolkit has evolved over the years, with subsequent editions and volumes addressing emerging challenges and technologies in the data warehousing domain.

The principles laid out in these texts have been instrumental in guiding organizations through the complexities of data integration, storage, and retrieval, making them indispensable resources for data professionals. As businesses increasingly rely on data-driven decision-making, understanding Kimball’s methodologies becomes crucial for anyone involved in the design and implementation of data warehousing solutions.

Key Takeaways

- Kimball’s Data Warehouse Toolkit Classics are essential resources for understanding data warehousing and dimensional modeling.

- Data warehousing has evolved over time, from simple data storage to a comprehensive approach for organizing and analyzing data.

- The Kimball Methodology provides a comprehensive approach to data warehousing, including techniques for designing, developing, and maintaining a data warehouse.

- Dimensional modeling is a key concept in data warehousing, focusing on organizing data into dimensions and facts for easy analysis.

- ETL (Extract, Transform, Load) plays a crucial role in data warehousing, ensuring that data is extracted from source systems, transformed to fit the data warehouse schema, and loaded into the data warehouse.

The Evolution of Data Warehousing

Data warehousing has undergone a remarkable transformation since its inception in the late 1980s. Initially, organizations relied on traditional databases to store operational data, which often led to inefficiencies when it came to reporting and analysis. The need for a more structured approach to data management became apparent as businesses sought to leverage their data for strategic insights.

This led to the development of the first data warehouses, which were designed to consolidate data from various sources into a single repository optimized for query performance. As technology advanced, so too did the capabilities of data warehousing solutions. The introduction of online analytical processing (OLAP) allowed for more sophisticated analysis of multidimensional data, enabling users to slice and dice information in ways that were previously unimaginable.

The rise of big data further complicated the landscape, as organizations began to grapple with vast amounts of unstructured data generated from various sources such as social media, IoT devices, and web interactions. This evolution necessitated new approaches to data warehousing that could accommodate both structured and unstructured data while maintaining performance and scalability.

The Kimball Methodology: A Comprehensive Approach to Data Warehousing

At the heart of Kimball’s methodology is the concept of building a data warehouse that is both user-friendly and efficient. This approach is often referred to as the “dimensional approach,” which emphasizes the importance of organizing data into dimensions and facts. Dimensions are descriptive attributes related to the facts, which are quantitative measurements that users want to analyze.

By structuring data in this way, organizations can create intuitive models that align closely with business processes and user needs. One of the key tenets of Kimball’s methodology is the idea of “bus architecture,” which promotes a standardized approach to integrating data from various sources. It relies on conformed dimensions, meaning dimensions such as date or product that are defined once and shared by every subject area that uses them. This consistency across subject areas makes it easier for users to navigate the warehouse and to combine results from different business processes.

Additionally, Kimball advocates for an iterative development process, where organizations can gradually build out their data warehouse capabilities over time. This flexibility allows businesses to adapt to changing requirements and incorporate new data sources as they emerge.
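The bus architecture is often planned with a simple matrix of business processes against the dimensions they share. The sketch below is illustrative only: the process and dimension names are hypothetical, and the helper functions are not taken from Kimball’s texts. It shows one way to find which dimensions would need to be conformed across subject areas.

```python
# Hypothetical bus matrix: each business process maps to the dimensions it uses.
BUS_MATRIX = {
    "retail_sales": {"date", "product", "store", "customer", "promotion"},
    "inventory": {"date", "product", "store"},
    "returns": {"date", "product", "customer"},
}


def conformed_dimensions(matrix):
    """Return dimensions used by at least two business processes."""
    counts = {}
    for dims in matrix.values():
        for dim in dims:
            counts[dim] = counts.get(dim, 0) + 1
    return sorted(dim for dim, n in counts.items() if n >= 2)


def shared_dimensions(matrix, process_a, process_b):
    """Return dimensions two processes have in common (candidates for combined reports)."""
    return sorted(matrix[process_a] & matrix[process_b])


print(conformed_dimensions(BUS_MATRIX))
print(shared_dimensions(BUS_MATRIX, "inventory", "returns"))
```

Because the matrix is just data, a new subject area can be added iteratively as one more entry, without touching the ones already built.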

Understanding Dimensional Modeling

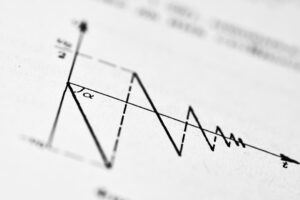

Dimensional modeling is a critical component of Kimball’s approach, providing a framework for organizing data in a way that enhances usability and performance. At its core, dimensional modeling involves creating star schemas or snowflake schemas that represent the relationships between facts and dimensions. A star schema consists of a central fact table surrounded by dimension tables, while a snowflake schema normalizes dimension tables into related sub-tables.

Both structures aim to make analytical queries easier to write, but they trade off differently. A star schema keeps the number of joins low by holding each dimension in a single denormalized table, whereas a snowflake schema reduces redundancy at the cost of additional joins. For example, consider a retail organization that wants to analyze sales data. In a star schema, the fact table might contain sales transactions with measures such as revenue and quantity sold, while dimension tables could include attributes like product details, customer demographics, and time periods.

This structure allows users to easily generate reports that answer questions such as “What were our top-selling products last quarter?” or “How did sales vary by region?”
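As a minimal sketch of the retail example, the following uses Python’s built-in sqlite3 module to build a small star schema and answer both questions. All table names, column names, and sample rows are assumptions made up for illustration; a real warehouse would have many more attributes and a dedicated database engine.

```python
import sqlite3

# Hypothetical retail star schema: one fact table joined to three dimension tables.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_product  (product_key INTEGER PRIMARY KEY, product_name TEXT, category TEXT);
CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, customer_name TEXT, region TEXT);
CREATE TABLE dim_date     (date_key INTEGER PRIMARY KEY, full_date TEXT, quarter TEXT);
CREATE TABLE fact_sales (
    product_key   INTEGER REFERENCES dim_product(product_key),
    customer_key  INTEGER REFERENCES dim_customer(customer_key),
    date_key      INTEGER REFERENCES dim_date(date_key),
    quantity_sold INTEGER,
    revenue       REAL
);
""")

# Made-up sample rows.
conn.executemany("INSERT INTO dim_product VALUES (?, ?, ?)",
                 [(1, "Widget", "Hardware"), (2, "Gadget", "Hardware")])
conn.executemany("INSERT INTO dim_customer VALUES (?, ?, ?)",
                 [(1, "Acme", "East"), (2, "Globex", "West")])
conn.executemany("INSERT INTO dim_date VALUES (?, ?, ?)",
                 [(20240115, "2024-01-15", "2024-Q1"), (20240420, "2024-04-20", "2024-Q2")])
conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?, ?, ?)", [
    (1, 1, 20240115, 10, 100.0),
    (2, 2, 20240115, 3, 90.0),
    (2, 1, 20240115, 2, 60.0),
    (1, 2, 20240420, 5, 50.0),
])


def top_products(conn, quarter):
    """Rank products by revenue within one quarter."""
    return conn.execute("""
        SELECT p.product_name, SUM(f.revenue) AS total_revenue
        FROM fact_sales f
        JOIN dim_product p ON p.product_key = f.product_key
        JOIN dim_date d    ON d.date_key = f.date_key
        WHERE d.quarter = ?
        GROUP BY p.product_name
        ORDER BY total_revenue DESC
    """, (quarter,)).fetchall()


def revenue_by_region(conn):
    """Total revenue per customer region, ordered by region name."""
    return conn.execute("""
        SELECT c.region, SUM(f.revenue) AS total_revenue
        FROM fact_sales f
        JOIN dim_customer c ON c.customer_key = f.customer_key
        GROUP BY c.region
        ORDER BY c.region
    """).fetchall()


print(top_products(conn, "2024-Q1"))  # [('Gadget', 150.0), ('Widget', 100.0)]
print(revenue_by_region(conn))        # [('East', 160.0), ('West', 140.0)]
```

Each question needs only one join per dimension involved, which is the property that makes the star layout easy to query.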

The Role of ETL in Data Warehousing

Extract, Transform, Load (ETL) processes are fundamental to the functioning of any data warehouse. ETL involves extracting data from various source systems, transforming it into a suitable format for analysis, and loading it into the data warehouse. This process is crucial for ensuring that the data within the warehouse is accurate, consistent, and up-to-date.

In Kimball’s methodology, ETL is not merely a technical task; it is an integral part of the overall design and architecture of the data warehouse. The extraction phase involves gathering data from disparate sources such as transactional databases, flat files, or external APIs. Once extracted, the transformation phase applies business rules to cleanse and enrich the data.

This may include tasks such as filtering out duplicates, converting data types, or aggregating values. Finally, during the loading phase, the transformed data is inserted into the appropriate tables within the data warehouse. Effective ETL processes are essential for maintaining data integrity and ensuring that users have access to reliable information for decision-making.
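The three phases can be sketched in a few lines of Python using only the standard library. This is a simplified illustration rather than a production pipeline: the inline CSV source, the `fact_orders` table, and the rules applied (drop duplicate order IDs, skip rows with a missing quantity, derive revenue) are all assumptions chosen for the example.

```python
import csv
import io
import sqlite3

# Hypothetical source extract; in practice this would come from a file, database, or API.
SOURCE_CSV = """order_id,product,quantity,unit_price
1001,Widget,2,9.99
1002,Gadget,1,24.50
1001,Widget,2,9.99
1003,Widget,,9.99
"""


def extract(text):
    """Extract: read raw rows from the source as dictionaries of strings."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop duplicates and incomplete rows, convert types, derive revenue."""
    seen = set()
    clean = []
    for row in rows:
        if row["order_id"] in seen or not row["quantity"]:
            continue  # duplicate order or missing quantity
        seen.add(row["order_id"])
        quantity = int(row["quantity"])
        unit_price = float(row["unit_price"])
        clean.append((int(row["order_id"]), row["product"], quantity,
                      round(quantity * unit_price, 2)))
    return clean


def load(conn, rows):
    """Load: insert the transformed rows into the target table."""
    conn.execute("""CREATE TABLE IF NOT EXISTS fact_orders (
        order_id INTEGER PRIMARY KEY, product TEXT, quantity INTEGER, revenue REAL)""")
    conn.executemany("INSERT INTO fact_orders VALUES (?, ?, ?, ?)", rows)
    conn.commit()


etl_conn = sqlite3.connect(":memory:")
load(etl_conn, transform(extract(SOURCE_CSV)))
loaded = etl_conn.execute(
    "SELECT order_id, product, quantity, revenue FROM fact_orders ORDER BY order_id"
).fetchall()
print(loaded)  # [(1001, 'Widget', 2, 19.98), (1002, 'Gadget', 1, 24.5)]
```

Keeping each phase as its own function makes the business rules in the transform step easy to test separately from the source and target systems.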

Best Practices for Data Warehouse Design

Designing an effective data warehouse requires careful consideration of several best practices that can enhance performance and usability. One fundamental principle is to prioritize user requirements throughout the design process. Engaging with end-users early on helps ensure that the data warehouse aligns with their needs and expectations.

This user-centric approach can lead to higher adoption rates and more meaningful insights derived from the data. Another best practice is to implement a robust metadata management strategy. Metadata provides context about the data stored within the warehouse, including definitions, lineage, and usage statistics.

By maintaining comprehensive metadata documentation, organizations can improve data governance and facilitate better communication among stakeholders. Additionally, adopting a modular design can enhance flexibility; by creating separate modules for different business areas or functions, organizations can scale their data warehousing efforts more effectively without disrupting existing systems.
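One lightweight way to picture metadata management is a small catalog that records a definition and a source for every column. The classes and field names below are hypothetical and only sketch the idea; dedicated catalog tools track far more, such as owners, refresh schedules, and usage statistics.

```python
from dataclasses import dataclass, field


@dataclass
class ColumnMetadata:
    name: str
    definition: str  # business meaning of the column
    source: str      # lineage: the upstream system and field it is derived from


@dataclass
class TableMetadata:
    name: str
    columns: dict = field(default_factory=dict)

    def add_column(self, name, definition, source):
        self.columns[name] = ColumnMetadata(name, definition, source)

    def lineage(self):
        """Map each column to the source it was derived from."""
        return {name: col.source for name, col in self.columns.items()}


# Hypothetical entries for a sales fact table.
fact_sales_meta = TableMetadata("fact_sales")
fact_sales_meta.add_column("revenue", "Net sale amount in USD", "orders.total_amount")
fact_sales_meta.add_column("quantity_sold", "Units sold per order line", "order_lines.qty")
print(fact_sales_meta.lineage())
```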

Implementing Kimball’s Data Warehouse Toolkit in Your Organization

Implementing Kimball’s Data Warehouse Toolkit within an organization involves several key steps that require collaboration across various teams. First and foremost is conducting a thorough assessment of existing systems and identifying business requirements. This assessment should involve stakeholders from different departments to ensure that all perspectives are considered when designing the new system.

Once requirements are gathered, organizations can begin developing a high-level architecture based on Kimball’s principles. This includes defining fact and dimension tables, establishing ETL processes, and determining how users will access and interact with the data warehouse. It is also essential to establish governance frameworks that outline roles and responsibilities related to data management and quality assurance.

Training is another critical aspect of successful implementation. Providing comprehensive training sessions for end-users ensures they understand how to navigate the new system effectively and leverage its capabilities for their analytical needs. Continuous feedback loops should be established post-implementation to refine processes and address any challenges that arise as users begin interacting with the new system.

The Future of Data Warehousing: Trends and Innovations

As technology continues to evolve, so too does the field of data warehousing. One significant trend is the increasing adoption of cloud-based solutions that offer scalability and flexibility beyond traditional on-premises systems. Cloud platforms let organizations store vast amounts of data without a large upfront investment in infrastructure, while providing access to advanced analytics tools powered by artificial intelligence (AI) and machine learning (ML).

Another innovation reshaping the landscape is real-time analytics capabilities facilitated by streaming technologies. Organizations are now able to analyze data as it is generated rather than relying solely on batch processing methods. This shift allows businesses to respond more quickly to changing market conditions or customer behaviors, enhancing their competitive edge.

Furthermore, there is a growing emphasis on integrating machine learning models directly into data warehousing environments. By embedding predictive analytics capabilities within the warehouse itself, organizations can derive insights from historical trends while simultaneously forecasting future outcomes based on current data patterns.

In conclusion, as we look ahead at the future of data warehousing, it is clear that embracing these trends will be essential for organizations seeking to remain competitive in an increasingly data-driven world. The principles established by Ralph Kimball continue to provide a solid foundation upon which these innovations can be built, ensuring that businesses can effectively leverage their data assets for strategic advantage.

FAQs

What is Kimball’s Data Warehouse Toolkit Classics?

Kimball’s Data Warehouse Toolkit Classics is a collection of books written by Ralph Kimball and Margy Ross that provide comprehensive guidance on building and maintaining data warehouses and business intelligence systems.

What topics are covered in Kimball’s Data Warehouse Toolkit Classics?

The books cover a wide range of topics related to data warehousing, including dimensional modeling, ETL (extract, transform, load) processes, data warehouse architecture, and best practices for designing and implementing data warehouses.

Who are the authors of Kimball’s Data Warehouse Toolkit Classics?

The authors of Kimball’s Data Warehouse Toolkit Classics are Ralph Kimball and Margy Ross, who are both well-known experts in the field of data warehousing and business intelligence.

What is dimensional modeling?

Dimensional modeling is a data modeling technique used in data warehousing to organize and structure data for easy and efficient querying and analysis. It involves organizing data into dimensions (such as time, geography, and product) and facts (such as sales and inventory) to provide a clear and intuitive view of business data.

What is ETL?

ETL stands for extract, transform, load, and refers to the processes involved in extracting data from source systems, transforming it into a format suitable for analysis, and loading it into a data warehouse or other target system. ETL is a critical component of data warehousing and business intelligence systems.