“Thinking, Fast and Slow,” authored by Nobel laureate Daniel Kahneman, is a profound exploration of the dual systems that govern human thought processes. The book delves into the intricacies of how we think, make decisions, and ultimately navigate the complexities of life. Kahneman’s work synthesizes decades of research in psychology and behavioral economics, revealing the often irrational nature of human judgment.

By dissecting the mechanisms behind our thought processes, Kahneman provides invaluable insights into the cognitive biases that can lead us astray, as well as the heuristics that simplify our decision-making. At its core, the book posits that human thinking operates through two distinct systems: System 1, which is fast, automatic, and intuitive; and System 2, which is slow, deliberate, and analytical. This framework not only elucidates the ways in which we process information but also highlights the inherent flaws in our reasoning.

Kahneman’s exploration is not merely academic; it has practical implications for various fields, including economics, medicine, and public policy. By understanding how these systems interact and influence our decisions, we can better navigate the challenges of everyday life and improve our decision-making skills.

Key Takeaways

- “Thinking, Fast and Slow” explores the two systems of thinking: the fast, intuitive, and emotional System 1, and the slow, deliberate, and logical System 2.

- Cognitive biases and heuristics are mental shortcuts that can lead to errors in judgment and decision making.

- Anchoring and framing can significantly influence our perceptions and decisions, often leading to irrational choices.

- Prospect theory explains how people evaluate potential gains and losses relative to a reference point, rather than judging final outcomes alone.

- Overconfidence and the illusion of validity can lead to poor decision making and a lack of awareness of our own limitations.

The Two Systems of Thinking

Kahneman introduces the concept of two systems of thinking that operate simultaneously within our minds. System 1 is characterized by its speed and efficiency; it operates automatically and effortlessly, allowing us to make quick judgments based on intuition and experience. For instance, when we see a friend’s face in a crowd or quickly solve a simple math problem like 2 + 2, we rely on System 1.

This system is adept at recognizing patterns and making snap decisions, which can be advantageous in situations requiring immediate responses. Conversely, System 2 is more methodical and requires conscious effort. It engages in complex computations and critical thinking, often taking longer to arrive at conclusions.

When faced with a challenging problem—such as calculating a complex equation or making a significant life decision—System 2 comes into play. This system is essential for tasks that require deep analysis and logical reasoning. However, it is also more prone to fatigue and can be easily overwhelmed by cognitive load.

Cognitive Biases and Heuristics

Cognitive biases are systematic deviations from rational judgment, often resulting from reliance on heuristics, the mental shortcuts that simplify decision-making. Kahneman identifies numerous cognitive biases that arise from the interplay between System 1 and System 2. For example, the availability heuristic leads individuals to assess the probability of events based on how easily examples come to mind.

This can skew perceptions of risk; for instance, after hearing about a plane crash, a person might overestimate the dangers of flying despite statistical evidence indicating it is one of the safest modes of transportation. Another notable bias is confirmation bias, where individuals favor information that confirms their preexisting beliefs while disregarding contradictory evidence. This bias can significantly impact decision-making in various contexts, from personal relationships to political affiliations.

Kahneman illustrates how these biases can lead to flawed judgments and decisions, often without individuals being aware of their influence. By recognizing these cognitive biases, we can strive to mitigate their effects and make more informed choices.

The Influence of Anchoring and Framing

Anchoring refers to the cognitive bias where individuals rely too heavily on the first piece of information encountered when making decisions. This initial “anchor” can significantly influence subsequent judgments and choices. For example, if a person is presented with a high initial price for a car, they may perceive subsequent prices as more reasonable than they actually are, even if those prices are still above market value.

Kahneman’s research demonstrates that anchors can be arbitrary yet powerful; even irrelevant numbers can sway decisions if they are presented first.

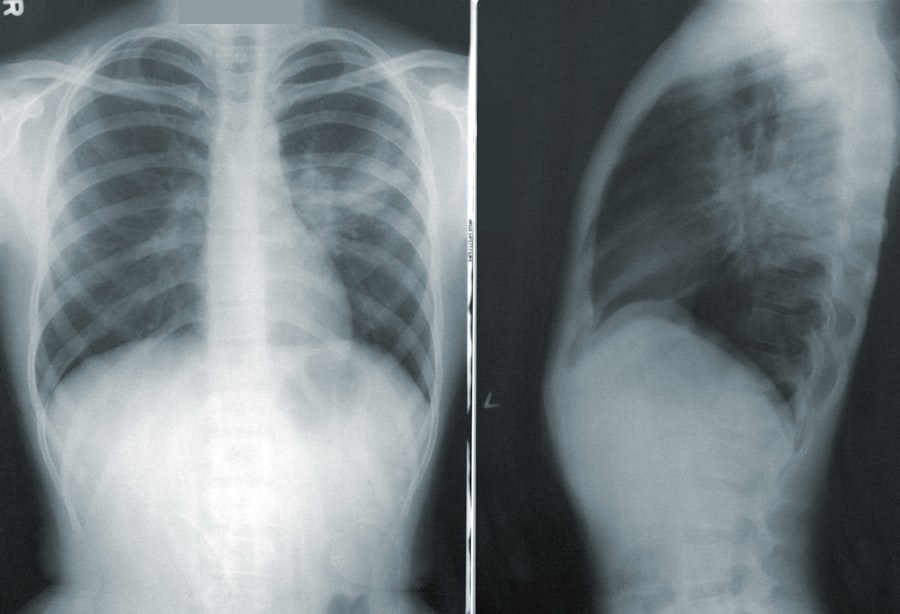

Framing, by contrast, concerns how a choice is presented: the same choice can yield different responses depending on whether it is framed positively or negatively.

For instance, people may react differently to a medical treatment described as having a “90% survival rate” compared to one with a “10% mortality rate,” despite both statements conveying the same information. This phenomenon underscores the importance of context in shaping perceptions and decisions. By understanding anchoring and framing effects, individuals can become more aware of how their choices may be influenced by external factors.

Prospect Theory and Decision Making

Prospect Theory, developed by Kahneman and his collaborator Amos Tversky, revolutionized the understanding of how people make decisions under risk and uncertainty. Unlike traditional economic theories that assume individuals act rationally to maximize utility, Prospect Theory posits that people evaluate potential losses and gains differently. Specifically, losses tend to weigh more heavily on decision-making than equivalent gains—a phenomenon known as loss aversion.

For example, consider a choice between a guaranteed gain of $50 and a 50% chance to win $100. Both options have the same expected value of $50, yet many people prefer the guaranteed gain, because the possibility of walking away with nothing weighs on them more than the extra $50 attracts them. This preference shows risk-averse behavior when gains are at stake. Loss aversion proper appears when a gamble also involves a possible loss: most people decline a coin flip to win or lose the same amount, because the loss would hurt more than the equivalent gain would please.
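The arithmetic behind this choice can be sketched in a few lines of Python. This is only an illustration, not Kahneman's own presentation: the function names are hypothetical, and the parameter values (0.88, 2.25, 0.61) are assumptions taken from the estimates Tversky and Kahneman published in 1992, which this article does not cite. The sketch compares the plain expected value of the two options with a simplified prospect-theory valuation.

```python
# Illustrative prospect-theory sketch. The parameter values are assumptions
# borrowed from Tversky and Kahneman's 1992 estimates, not from this article.
ALPHA = 0.88   # curvature of the value function (diminishing sensitivity)
LAMBDA = 2.25  # loss-aversion coefficient: losses loom larger than gains
GAMMA = 0.61   # curvature of the probability weighting function for gains


def value(x: float) -> float:
    """Subjective value of a gain or loss x, measured from the reference point."""
    if x >= 0:
        return x ** ALPHA
    return -LAMBDA * ((-x) ** ALPHA)


def weight(p: float) -> float:
    """Decision weight attached to a probability p of a gain."""
    return p ** GAMMA / (p ** GAMMA + (1 - p) ** GAMMA) ** (1 / GAMMA)


def expected_value(outcomes):
    """Plain expected value of a list of (probability, amount) pairs."""
    return sum(p * x for p, x in outcomes)


def prospect_value(outcomes):
    """Simplified valuation for gambles with a single nonzero gain outcome."""
    return sum(weight(p) * value(x) for p, x in outcomes)


sure_thing = [(1.0, 50)]           # guaranteed $50
gamble = [(0.5, 100), (0.5, 0)]    # 50% chance of $100, otherwise nothing

print("Expected values:", expected_value(sure_thing), expected_value(gamble))
print("Prospect values:", prospect_value(sure_thing), prospect_value(gamble))
print("Pain of losing $100 vs. pleasure of winning $100:",
      abs(value(-100)) / value(100))
```

Under these assumed parameters the two options tie on expected value, but the sure $50 receives the higher prospect value, and a $100 loss is weighted more than twice as heavily as a $100 gain. Different parameter choices would shift the numbers, though not the direction of either comparison as long as the value function stays concave for gains and the loss-aversion coefficient exceeds 1.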

Kahneman’s insights into Prospect Theory have profound implications for fields such as finance and marketing, where understanding consumer behavior can inform strategies for influencing choices.

Overconfidence and the Illusion of Validity

The Illusion of Validity

Overconfidence is the tendency to overestimate the accuracy of one’s own knowledge and predictions. Kahneman’s work highlights that in hard-to-predict domains, such as stock picking and long-term political forecasting, experts are frequently no more accurate than novices when predicting future events. The illusion of validity further compounds overconfidence by creating a false sense of certainty about one’s judgments or predictions based on past experiences or patterns. For instance, an investor who has experienced success in the stock market may become overly confident in their ability to predict future trends based solely on previous outcomes.

The Dangers of Overreliance on Past Performance

This overreliance on past performance can lead to significant financial losses when market conditions change unexpectedly. By recognizing the pitfalls of overconfidence and the illusion of validity, individuals can adopt a more cautious approach to decision-making.

Towards a More Cautious Approach

Acknowledging the limits of their knowledge and predictive abilities helps individuals avoid costly mistakes and make better decisions in the long run.

The Role of Intuition and Expertise

Kahneman emphasizes the complex relationship between intuition and expertise in decision-making processes. While System 1 relies heavily on intuitive judgments, these instincts are often shaped by experience and knowledge gained over time. Experts in a particular field may develop finely tuned intuitions that allow them to make rapid assessments with remarkable accuracy.

For example, seasoned chess players can quickly identify winning strategies based on their extensive experience with similar positions. However, intuition is not infallible; it can lead to errors when applied outside one’s area of expertise or when faced with novel situations. Kahneman cautions against overreliance on intuition without critical evaluation from System 2.

In high-stakes environments such as medicine or aviation, where decisions can have life-or-death consequences, it is crucial to balance intuitive judgments with analytical reasoning. By fostering an awareness of when to trust intuition versus when to engage in deeper analysis, individuals can enhance their decision-making capabilities.

Applying the Lessons of Thinking, Fast and Slow

The insights gleaned from “Thinking, Fast and Slow” have far-reaching applications across various domains of life. In personal finance, understanding cognitive biases such as loss aversion can help individuals make more rational investment choices rather than succumbing to emotional reactions during market fluctuations. Similarly, in organizational settings, leaders can benefit from recognizing how framing effects influence employee perceptions and motivation.

In education, incorporating lessons from Kahneman’s work can enhance teaching methodologies by encouraging critical thinking skills among students. By fostering an environment where students learn to question their assumptions and recognize cognitive biases, educators can prepare them for more effective decision-making in their future endeavors. Ultimately, “Thinking, Fast and Slow” serves as a guide for navigating the complexities of human thought processes.

By becoming aware of the dual systems of thinking and the cognitive biases that accompany them, individuals can cultivate better decision-making practices in both personal and professional contexts. The book challenges readers to reflect on their thought processes critically and strive for greater awareness in an increasingly complex world.

FAQs

What is “Thinking, Fast and Slow” by Daniel Kahneman about?

“Thinking, Fast and Slow” is a book by Daniel Kahneman that explores the two systems that drive the way we think. System 1 is fast, intuitive, and emotional; System 2 is slower, more deliberative, and more logical.

Who is Daniel Kahneman?

Daniel Kahneman (1934–2024) was an Israeli-American psychologist and winner of the 2002 Nobel Memorial Prize in Economic Sciences. He is known for his work in the field of behavioral economics and the psychology of judgment and decision-making.

What are some key concepts discussed in “Thinking, Fast and Slow”?

Some key concepts discussed in the book include cognitive biases, heuristics, prospect theory, and the impact of emotions on decision-making. Kahneman also explores the limitations of human rationality and the ways in which our thinking can be influenced by various factors.

How has “Thinking, Fast and Slow” been received by critics and readers?

The book has received widespread acclaim from both critics and readers. It has been praised for its insights into human cognition and decision-making, and its accessible writing style. “Thinking, Fast and Slow” has also been a bestseller and has had a significant impact on the fields of psychology, economics, and behavioral science.

What are some practical implications of the ideas presented in “Thinking, Fast and Slow”?

The book’s insights into cognitive biases and decision-making processes have practical implications for various fields, including business, public policy, and personal development. Understanding the ways in which our thinking can be influenced can help individuals and organizations make better decisions and avoid common pitfalls.